This repository contains an easy and intuitive approach to few-shot NER using most similar expansion over spaCy embeddings. Now with entity confidence scores!

Concise Concepts

When you want to apply NER to concise concepts, it is really easy to come up with examples, but pretty difficult to train an entire pipeline. Concise Concepts uses few-shot NER based on word embedding similarity to get you going with ease! Now with entity scoring!

Usage

This library defines matching patterns based on the most similar words found in each group, which are used to fill a spaCy EntityRuler. To better understand the rule definition, I recommend playing around with the spaCy Rule-based Matcher Explorer.
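As a rough illustration of the idea above, the sketch below turns groups of already-expanded words into pattern dictionaries in the format the spaCy EntityRuler accepts (a `label` plus a list of token attribute dicts). The helper name `build_patterns` and the choice to match on `LOWER` are assumptions made for illustration; the patterns the library actually generates may use different token attributes.

```python
def build_patterns(expanded):
    """Turn {label: [words]} into EntityRuler-style pattern dicts.

    Hypothetical helper: matching on the lowercased token text is an
    assumption, not necessarily what concise-concepts emits.
    """
    patterns = []
    for label, words in expanded.items():
        for word in words:
            # One token dict per whitespace-separated part of the phrase.
            token_specs = [{"LOWER": part.lower()} for part in word.split()]
            patterns.append({"label": label, "pattern": token_specs})
    return patterns


expanded = {
    "fruit": ["apple", "pear"],
    "vegetable": ["broccoli", "red pepper"],
}
patterns = build_patterns(expanded)
for p in patterns:
    print(p)
```

A list shaped like this can be handed to an EntityRuler through its `add_patterns` method.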

Tutorials

TechVizTheDataScienceGuy created a nice tutorial on how to use it.

The section Matching Pattern Rules expands on the construction, analysis and customization of these matching patterns.

Install

pip install concise-concepts

Quickstart

import spacy

from spacy import displacy

import concise_concepts

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ["beef", "pork", "fish", "lamb"],

}

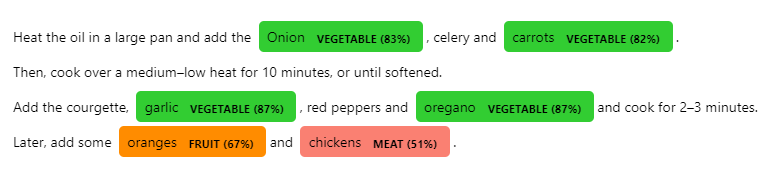

text = """

Heat the oil in a large pan and add the Onion, celery and carrots.

Then, cook over a medium–low heat for 10 minutes, or until softened.

Add the courgette, garlic, red peppers and oregano and cook for 2–3 minutes.

Later, add some oranges and chickens. """

nlp = spacy.load("en_core_web_lg", disable=["ner"])

nlp.add_pipe(

"concise_concepts",

config={

"data": data,

"ent_score": True, # Entity Scoring section

"verbose": True,

"exclude_pos": ["VERB", "AUX"],

"exclude_dep": ["DOBJ", "PCOMP"],

"include_compound_words": False,

"json_path": "./fruitful_patterns.json",

},

)

doc = nlp(text)

options = {

"colors": {"fruit": "darkorange", "vegetable": "limegreen", "meat": "salmon"},

"ents": ["fruit", "vegetable", "meat"],

}

ents = doc.ents

for ent in ents:

new_label = f"{ent.label_} ({ent._.ent_score:.0%})"

options["colors"][new_label] = options["colors"].get(ent.label_.lower(), None)

options["ents"].append(new_label)

ent.label_ = new_label

doc.ents = ents

displacy.render(doc, style="ent", options=options)

Features

Matching Pattern Rules

A general introduction to the use of matching patterns is given in the Usage section.

Customizing Matching Pattern Rules

Even though the baseline parameters provide a decent result, the construction of these matching rules can be customized via the config passed to the spaCy pipeline.

- exclude_pos: A list of POS tags to be excluded from the rule-based match.

- exclude_dep: A list of dependencies to be excluded from the rule-based match.

- include_compound_words: If True, it will include compound words in the entity. For example, if the entity is "New York", it will also include "New York City" as an entity.

- case_sensitive: Whether to match the case of the words in the text.
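To make the effect of exclude_pos, exclude_dep and case_sensitive more concrete, here is a sketch of how such settings could be written as a single token constraint, using the `NOT_IN` operator and the `ORTH`/`LOWER` attributes of spaCy's rule-based matcher. The helper `token_spec` is hypothetical, and whether the library builds its patterns exactly this way is an assumption.

```python
def token_spec(word, exclude_pos=(), exclude_dep=(), case_sensitive=False):
    """Build one token constraint dict (hypothetical helper)."""
    # ORTH matches the exact text, LOWER matches the lowercased text.
    spec = {"ORTH": word} if case_sensitive else {"LOWER": word.lower()}
    if exclude_pos:
        # Reject tokens whose part-of-speech tag is in the excluded list.
        spec["POS"] = {"NOT_IN": list(exclude_pos)}
    if exclude_dep:
        # Reject tokens whose dependency label is in the excluded list.
        spec["DEP"] = {"NOT_IN": list(exclude_dep)}
    return spec


spec = token_spec(
    "Orange",
    exclude_pos=["VERB", "AUX"],
    exclude_dep=["DOBJ", "PCOMP"],
)
print(spec)
```

With constraints like these, a word is only matched when its tag and dependency fall outside the excluded sets.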

Analyze Matching Pattern Rules

To motivate actually looking at the data and support interpretability, the matching patterns that have been generated are stored as ./main_patterns.json. This behavior can be changed by using the json_path variable via the config passed to the spaCy pipeline.
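A quick way to inspect such a file is to load it and count the patterns per label. The sketch below writes a small stand-in file first so that it runs on its own; the file layout (a JSON list of objects with `label` and `pattern` keys) is an assumption and should be checked against the file the library actually writes.

```python
import json
import tempfile
from collections import Counter
from pathlib import Path

# Stand-in for a generated patterns file; the structure is assumed.
sample = [
    {"label": "fruit", "pattern": [{"LOWER": "apple"}]},
    {"label": "fruit", "pattern": [{"LOWER": "pear"}]},
    {"label": "meat", "pattern": [{"LOWER": "beef"}]},
]

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "patterns.json"
    path.write_text(json.dumps(sample), encoding="utf-8")

    # Load the file back and count how many patterns each label has.
    loaded = json.loads(path.read_text(encoding="utf-8"))
    counts = Counter(entry["label"] for entry in loaded)

print(dict(counts))
```

Labels with far more patterns than expected are a good place to start looking for noisy expansions.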

Most Similar Word Expansion

Use topn to set a specific number of words to expand over for each group.

data = {
    "fruit": ["apple", "pear", "orange"],
    "vegetable": ["broccoli", "spinach", "tomato"],
    "meat": ["beef", "pork", "fish", "lamb"]
}

# One topn value per group in data.
topn = [50, 50, 150]

assert len(topn) == len(data)

nlp.add_pipe("concise_concepts", config={"data": data, "topn": topn})
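The idea behind the expansion can be sketched without any model: rank the vocabulary by cosine similarity to the seed words and keep the top n per group. The 2-d vectors below are made up, and using the mean seed vector as the query is an assumption for illustration, not a description of the library's internals.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def most_similar(seeds, vectors, topn):
    """Return the topn vocabulary words closest to the mean seed vector."""
    dims = len(next(iter(vectors.values())))
    centroid = [sum(vectors[s][i] for s in seeds) / len(seeds) for i in range(dims)]
    candidates = [w for w in vectors if w not in seeds]
    candidates.sort(key=lambda w: cosine(vectors[w], centroid), reverse=True)
    return candidates[:topn]


# Toy 2-d vectors, made up for illustration only.
vectors = {
    "apple": [1.0, 0.1],
    "pear": [0.9, 0.2],
    "banana": [0.95, 0.15],
    "beef": [0.1, 1.0],
    "pork": [0.2, 0.9],
    "lamb": [0.15, 0.95],
}

data = {"fruit": ["apple", "pear"], "meat": ["beef", "pork"]}
topn = [1, 1]  # one value per group, in the same order as data

expanded = {
    label: seeds + most_similar(seeds, vectors, n)
    for (label, seeds), n in zip(data.items(), topn)
}
print(expanded)
```

A larger topn widens recall for a group at the cost of pulling in less closely related words.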

Entity Scoring

Use embedding-based word similarity to score entities.

import spacy

import concise_concepts

data = {
    "ORG": ["Google", "Apple", "Amazon"],
    "GPE": ["Netherlands", "France", "China"],
}

text = """Sony was founded in Japan."""

nlp = spacy.load("en_core_web_lg")
nlp.add_pipe("concise_concepts", config={"data": data, "ent_score": True, "case_sensitive": True})
doc = nlp(text)

print([(ent.text, ent.label_, ent._.ent_score) for ent in doc.ents])
# output
#
# [('Sony', 'ORG', 0.5207586), ('Japan', 'GPE', 0.7371268)]
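One plausible way to read such a score is as the average cosine similarity between the matched entity and the seed words of its label. The sketch below implements that reading with made-up 2-d vectors; the formula and the helper name `ent_score` are assumptions, since the exact scoring used by the library is not described here.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def ent_score(entity, seeds, vectors):
    """Mean cosine similarity between an entity and its label's seed words.

    Hypothetical scoring rule, used here only to illustrate the concept.
    """
    sims = [cosine(vectors[entity], vectors[seed]) for seed in seeds]
    return sum(sims) / len(sims)


# Toy unit-length vectors, made up for illustration only.
vectors = {
    "google": [1.0, 0.0],
    "apple": [0.8, 0.6],
    "sony": [0.6, 0.8],
}

score = ent_score("sony", ["google", "apple"], vectors)
print(round(score, 2))
```

Under this reading, an entity far from every seed word of its label gets a low score, which makes it a candidate for filtering.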

Custom Embedding Models

Use a gensim.Word2vec, gensim.FastText, or gensim.KeyedVectors model, either a pre-trained model from the gensim library or a custom model path.

data = {
    "fruit": ["apple", "pear", "orange"],
    "vegetable": ["broccoli", "spinach", "tomato"],
    "meat": ["beef", "pork", "fish", "lamb"]
}

# model from https://radimrehurek.com/gensim/downloader.html or path to local file
model_path = "glove-wiki-gigaword-300"

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

