A multi-lingual approach to AllenNLP CoReference Resolution, along with a wrapper for spaCy.

Crosslingual Coreference

Coreference resolution is amazing, but the data required to train a model is very scarce. In our case, the available training data for non-English languages also proved to be poorly annotated. Crosslingual Coreference therefore assumes that a model trained on English data with cross-lingual embeddings should also work for languages with similar sentence structures.

Install

pip install crosslingual-coreference

Quickstart

from crosslingual_coreference import Predictor

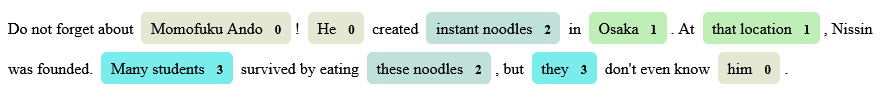

text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

# choose minilm for speed/memory and info_xlm for accuracy
predictor = Predictor(
    language="en_core_web_sm", device=-1, model_name="minilm"
)

print(predictor.predict(text)["resolved_text"])
# Output
#
# Do not forget about Momofuku Ando!
# Momofuku Ando created instant noodles in Osaka.
# At Osaka, Nissin was founded.
# Many students survived by eating instant noodles,
# but Many students don't even know Momofuku Ando.

Chunking/batching to resolve out-of-memory (OOM) errors

from crosslingual_coreference import Predictor

predictor = Predictor(
    language="en_core_web_sm",
    device=0,
    model_name="minilm",
    chunk_size=2500,
    chunk_overlap=2,
)
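To make the idea of chunking with overlap concrete, here is a minimal, library-independent sketch. It is not the package's implementation: the helper names `split_sentences` and `chunk_with_overlap` are hypothetical, and the sketch assumes that the chunk size is counted in characters and the overlap in sentences, which the text above does not specify.

```python
import re
from typing import List


def split_sentences(text: str) -> List[str]:
    """Naive splitter (assumption): break after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def chunk_with_overlap(
    sentences: List[str], chunk_size: int, chunk_overlap: int
) -> List[List[str]]:
    """Group sentences into chunks of roughly `chunk_size` characters.

    Each new chunk starts with the last `chunk_overlap` sentences of the
    previous one, so mentions near a boundary keep some context.
    """
    chunks: List[List[str]] = []
    current: List[str] = []
    current_len = 0
    for sentence in sentences:
        # Close the current chunk if adding this sentence would exceed the budget.
        if current and current_len + len(sentence) > chunk_size:
            chunks.append(current)
            # Carry the trailing sentences over into the next chunk.
            current = current[-chunk_overlap:] if chunk_overlap > 0 else []
            current_len = sum(len(s) for s in current)
        current.append(sentence)
        current_len += len(sentence)
    if current:
        chunks.append(current)
    return chunks


text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

# A deliberately small budget so the short example text is split at all.
for chunk in chunk_with_overlap(split_sentences(text), chunk_size=80, chunk_overlap=1):
    print(chunk)
```

Smaller chunks lower peak memory per prediction, while the overlap reduces the chance that a pronoun is separated from its antecedent at a chunk boundary.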

Use spaCy pipeline

import spacy
import crosslingual_coreference

text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

nlp = spacy.load("en_core_web_sm")
nlp.add_pipe(
    "xx_coref", config={"chunk_size": 2500, "chunk_overlap": 2, "device": 0}
)

doc = nlp(text)
print(doc._.coref_clusters)
# Output
#
# [[[4, 5], [7, 7], [27, 27], [36, 36]],
# [[12, 12], [15, 16]],
# [[9, 10], [27, 28]],
# [[22, 23], [31, 31]]]

print(doc._.resolved_text)
# Output
#
# Do not forget about Momofuku Ando!
# Momofuku Ando created instant noodles in Osaka.
# At Osaka, Nissin was founded.
# Many students survived by eating instant noodles,
# but Many students don't even know Momofuku Ando.
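The cluster output above appears to use inclusive `[start, end]` token indices, with the first span in each cluster acting as the antecedent. The sketch below illustrates that reading without loading spaCy or the model. The token list is written by hand to mimic the tokenizer, and the helpers `mention_texts` and `resolve_tokens` are hypothetical. Preferring the longer span when two mentions overlap is an assumption that happens to reproduce the resolved text shown above; it is not necessarily how the package resolves mentions.

```python
from typing import List

# Hand-written tokens for the example text (assumption: mimics the tokenizer).
tokens = [
    "Do", "not", "forget", "about", "Momofuku", "Ando", "!",
    "He", "created", "instant", "noodles", "in", "Osaka", ".",
    "At", "that", "location", ",", "Nissin", "was", "founded", ".",
    "Many", "students", "survived", "by", "eating", "these", "noodles", ",",
    "but", "they", "do", "n't", "even", "know", "him", ".",
]

# Clusters copied from the printed output above.
clusters = [
    [[4, 5], [7, 7], [27, 27], [36, 36]],
    [[12, 12], [15, 16]],
    [[9, 10], [27, 28]],
    [[22, 23], [31, 31]],
]


def mention_texts(tokens: List[str], clusters: List[List[List[int]]]) -> List[List[str]]:
    """Turn inclusive [start, end] token spans into readable mention strings."""
    return [
        [" ".join(tokens[start:end + 1]) for start, end in cluster]
        for cluster in clusters
    ]


def resolve_tokens(tokens: List[str], clusters: List[List[List[int]]]) -> List[str]:
    """Replace every later mention with the first mention of its cluster."""
    candidates = []
    for cluster in clusters:
        head_start, head_end = cluster[0]
        head = tokens[head_start:head_end + 1]
        for start, end in cluster[1:]:
            candidates.append((start, end, head))
    # Assumption: when two mentions overlap, keep the longer one.
    candidates.sort(key=lambda c: c[1] - c[0], reverse=True)
    chosen = {}
    taken = set()
    for start, end, head in candidates:
        span = set(range(start, end + 1))
        if span & taken:
            continue  # skip a mention that overlaps one already accepted
        taken |= span
        chosen[start] = (end, head)
    # Rebuild the token sequence, substituting accepted mentions.
    resolved = []
    i = 0
    while i < len(tokens):
        if i in chosen:
            end, head = chosen[i]
            resolved.extend(head)
            i = end + 1
        else:
            resolved.append(tokens[i])
            i += 1
    return resolved


for mentions in mention_texts(tokens, clusters):
    print(mentions)
print(" ".join(resolve_tokens(tokens, clusters)))
```

The joined result is space-separated tokens rather than detokenized text, so punctuation is surrounded by spaces; the substitutions themselves match the resolved text above.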

Available models

As of now, there are three models available: "info_xlm", "xlm_roberta" and "minilm", which scored 77, 74 and 74 on OntoNotes Release 5.0 English data, respectively.
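As a small convenience, the reported scores can be kept in a lookup table to pick a value for `model_name`. The helper `pick_model` is hypothetical and not part of the package; the speed preference simply follows the Quickstart comment that recommends minilm for speed and memory.

```python
# Scores as reported above (OntoNotes Release 5.0, English data).
MODEL_SCORES = {"info_xlm": 77, "xlm_roberta": 74, "minilm": 74}


def pick_model(prefer: str = "accuracy") -> str:
    """Return a model name for the given priority (hypothetical helper)."""
    if prefer == "accuracy":
        # Highest reported score wins.
        return max(MODEL_SCORES, key=MODEL_SCORES.get)
    if prefer == "speed":
        # Recommended in the Quickstart comment for speed/memory.
        return "minilm"
    raise ValueError(f"unknown preference: {prefer!r}")


print(pick_model("accuracy"))
print(pick_model("speed"))
```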

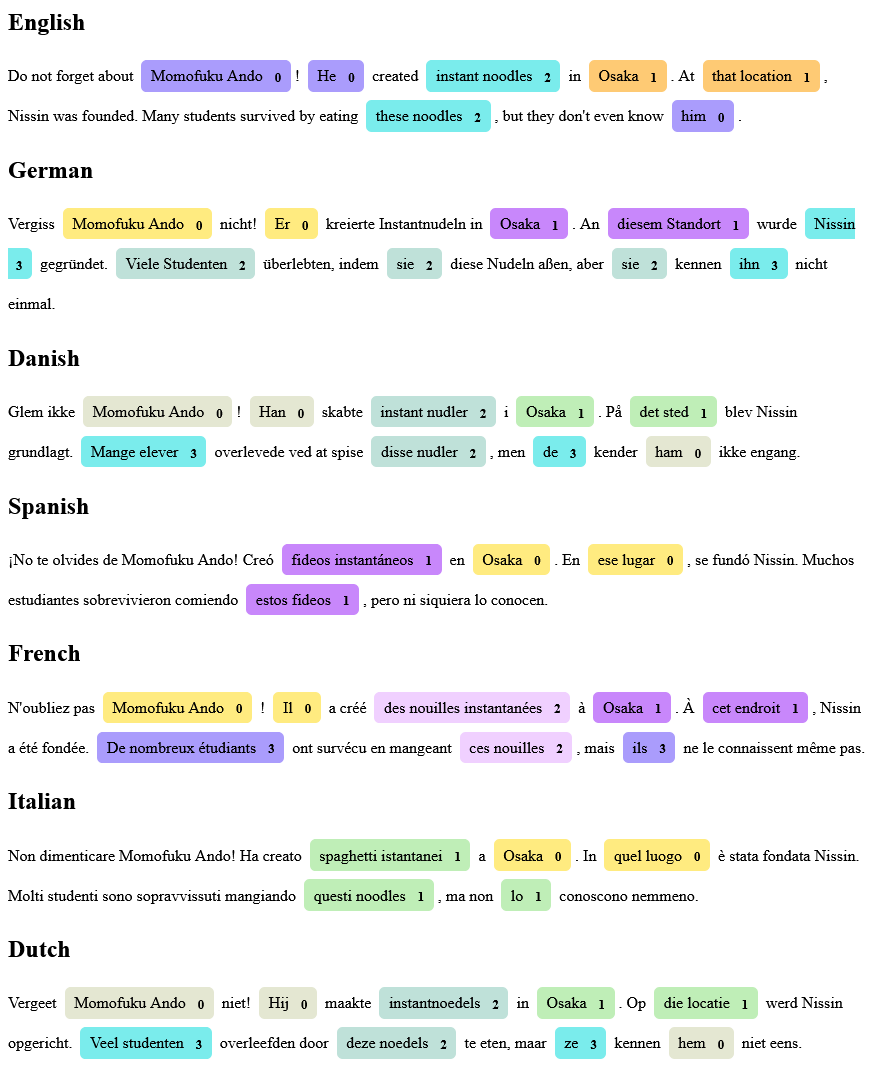

More Examples

File details

Details for the file crosslingual-coreference-0.2.3.tar.gz.

File metadata

- Download URL: crosslingual-coreference-0.2.3.tar.gz

- Upload date:

- Size: 10.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c6bb56dfdca24a4d667c5c41fee1e562f9d3c1cc16bcbe8525990cd4c3114f19 |
| MD5 | 9768435258f415327800f1c0c35768db |
| BLAKE2b-256 | 5d60565f342532d3d632b4ffe94521a367170b6c3d33a6dfd76111ded52daa02 |

File details

Details for the file crosslingual_coreference-0.2.3-py3-none-any.whl.

File metadata

- Download URL: crosslingual_coreference-0.2.3-py3-none-any.whl

- Upload date:

- Size: 11.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b216bd9591bcb91173fcaf945b591d47d0384582d6ab6dab7a37113a84f2b594 |
| MD5 | f740807a1164b2f10c103b5740bd28a0 |
| BLAKE2b-256 | e09c352141e6f10d3958e7338d23e112f3bc8fd57a9b2ca689fe74b56cbc0bf6 |