OpusCleaner

OpusCleaner is a data cleaner for machine translation and language model training data. The training scheduler that used to be part of it has moved to OpusTrainer.

Cleaner

The cleaner takes care of downloading and cleaning multiple datasets and preparing them for machine translation training.

opuscleaner-clean --parallel 4 data/train-parts/dataset.filter.json | gzip -c > clean.gz

Installation for cleaning

If you just want to use OpusCleaner for cleaning, you can install it from PyPI and then run it:

pip3 install opuscleaner

opuscleaner-server serve

Then you can go to http://127.0.0.1:8000/ to open the interface.

You can also install and run OpusCleaner on a remote machine, and use SSH local forwarding (e.g. ssh -L 8000:localhost:8000 you@remote.machine) to access the interface on your local machine.

Dependencies

(Mainly listed as shortcuts to documentation)

- FastAPI as the base for the backend part.

- Pydantic for conversion of untyped JSON to typed objects, and because FastAPI supports it automatically and gives you useful error messages if you mess things up.

- Vue for the frontend.

Screenshots

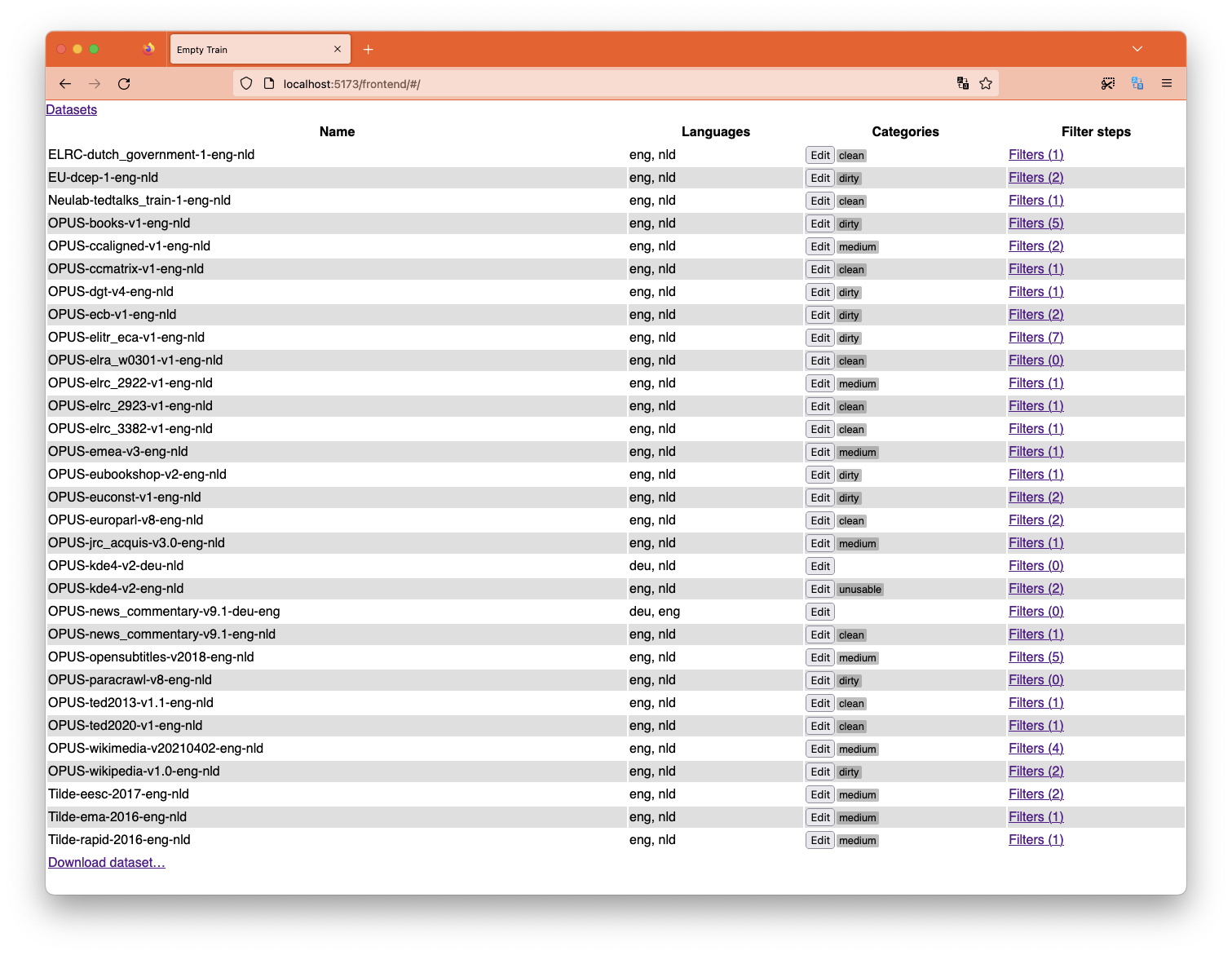

List and categorize the datasets you are going to use for training.

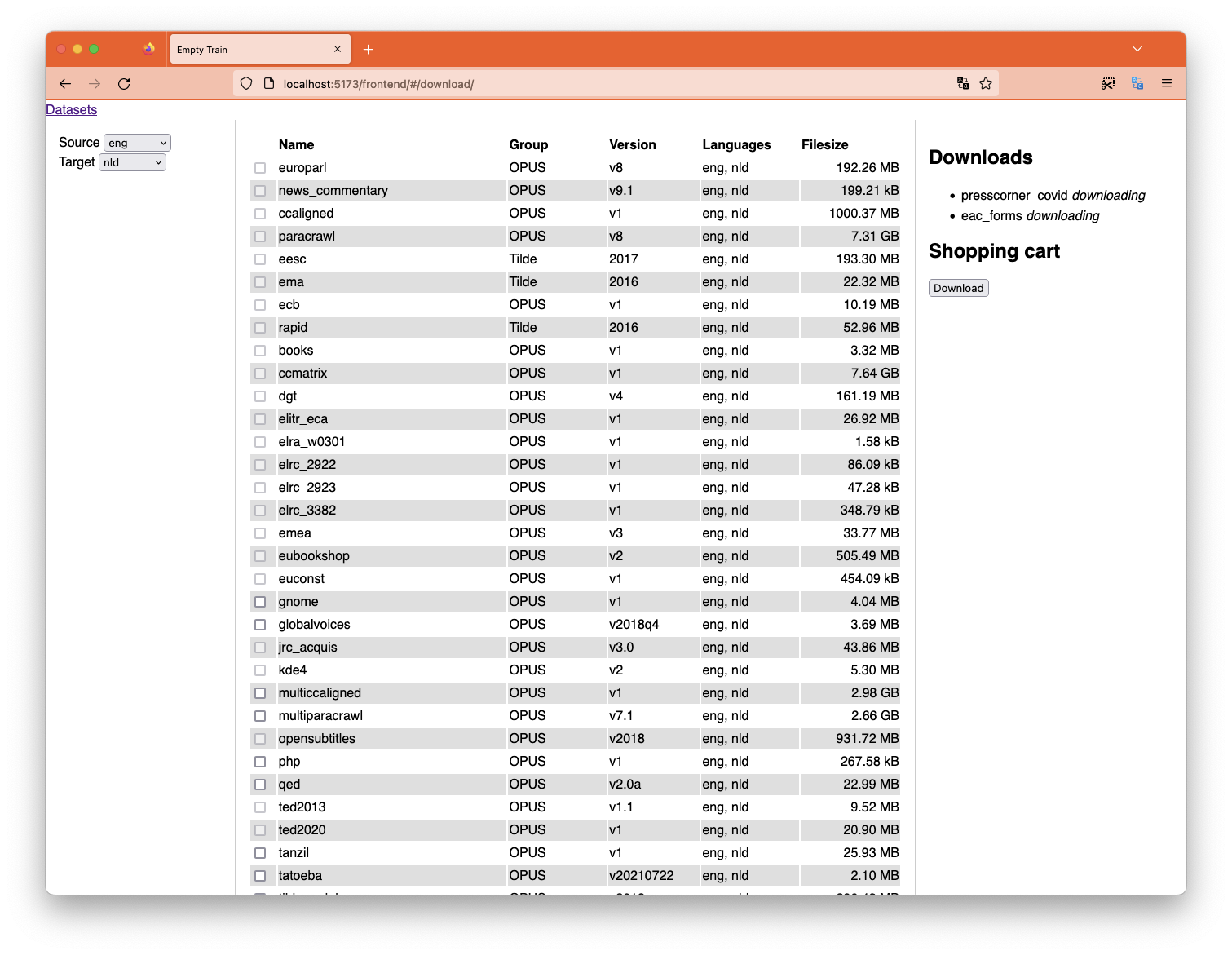

Download more datasets right from the interface.

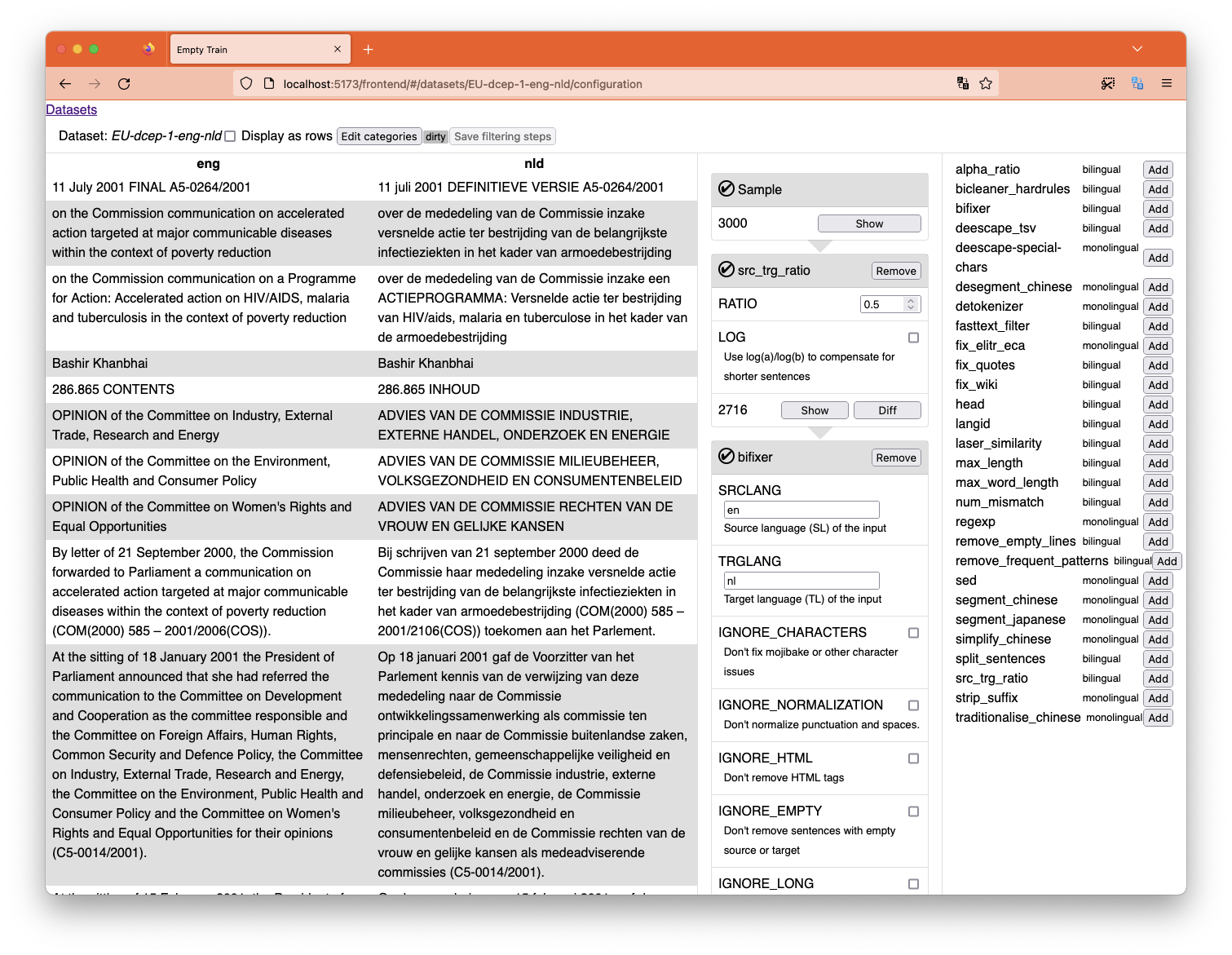

Filter each individual dataset, showing you the results immediately.

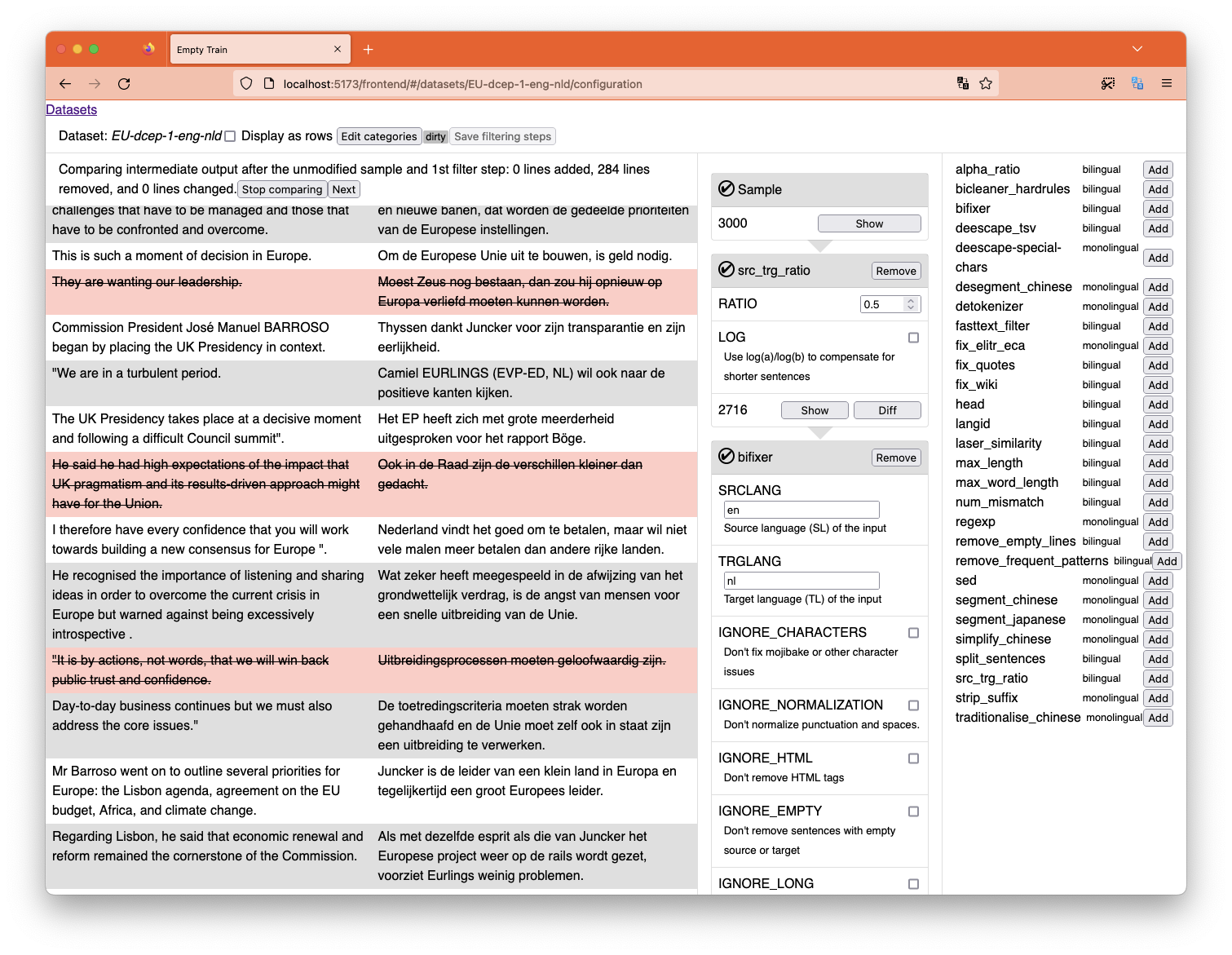

Compare the dataset at different stages of filtering to see the impact of each filter.

Using your own data

OpusCleaner scans for datasets and finds them automatically if they're in the right format. When you download OPUS data, it will get converted to this format, and there's nothing stopping you from adding your own in the same format.

By default, it scans for files matching data/train-parts/*.*.gz and derives which files make up a dataset from the filenames: name.en.gz and name.de.gz will be a dataset called name. The files are in the standard Moses format: one sentence per line, with the Nth line of the first file matching the Nth line of the second file.
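The naming convention can be illustrated with a small Python sketch. This is not OpusCleaner's own code: the function group_datasets is hypothetical, and treating everything before the last dot as the dataset name is an assumption about how names containing dots would be handled.

```python
from collections import defaultdict
from pathlib import PurePath


def group_datasets(filenames):
    """Group files like name.en.gz / name.de.gz by dataset name.

    Hypothetical helper for illustration only.
    Returns {dataset name: {language code: filename}}.
    """
    datasets = defaultdict(dict)
    for filename in filenames:
        base = PurePath(filename).name
        if not base.endswith(".gz"):
            continue
        # Strip ".gz", then split the language code off the right-hand side.
        # Assumption: anything before the last dot is the dataset name.
        name, sep, lang = base[: -len(".gz")].rpartition(".")
        if not sep or not name:
            continue  # does not match the name.lang.gz pattern
        datasets[name][lang] = filename
    return dict(datasets)
```

Calling group_datasets with data/train-parts/name.en.gz and data/train-parts/name.de.gz yields a single dataset called name with an en and a de file.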

When in doubt, just download one of the OPUS datasets through OpusCleaner, and replicate the format for your own dataset.
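If you would rather generate the files yourself, the following Python sketch writes a list of sentence pairs as two line-aligned gzip files and reads them back. The helper names write_parallel and read_parallel are hypothetical, and replacing embedded newlines with spaces is just one possible way to keep one sentence per line.

```python
import gzip
from itertools import zip_longest
from pathlib import Path


def write_parallel(directory, name, pairs, src="en", trg="de"):
    """Write sentence pairs as name.<src>.gz and name.<trg>.gz (hypothetical helper)."""
    directory = Path(directory)
    directory.mkdir(parents=True, exist_ok=True)
    src_path = directory / f"{name}.{src}.gz"
    trg_path = directory / f"{name}.{trg}.gz"
    with gzip.open(src_path, "wt", encoding="utf-8", newline="\n") as fs, \
         gzip.open(trg_path, "wt", encoding="utf-8", newline="\n") as ft:
        for s, t in pairs:
            # An embedded newline would shift every following line out of
            # alignment, so replace it with a space.
            fs.write(s.replace("\n", " ") + "\n")
            ft.write(t.replace("\n", " ") + "\n")
    return src_path, trg_path


def read_parallel(src_path, trg_path):
    """Read both files back, pairing the Nth line of each."""
    pairs = []
    with gzip.open(src_path, "rt", encoding="utf-8") as fs, \
         gzip.open(trg_path, "rt", encoding="utf-8") as ft:
        for s, t in zip_longest(fs, ft):
            if s is None or t is None:
                raise ValueError("files have different numbers of lines")
            pairs.append((s.rstrip("\n"), t.rstrip("\n")))
    return pairs
```

Reading the files back with read_parallel is a cheap way to confirm that both sides have the same number of lines before pointing OpusCleaner at them.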

If you want to use another path, you can use the DATA_PATH environment variable to change it, e.g. run DATA_PATH="./my-datasets/*.*.gz" opuscleaner-server.

Paths

- data/train-parts is scanned for datasets. You can change this by setting the DATA_PATH environment variable; the default is data/train-parts/*.*.gz.

- filters should contain filter JSON files. You can change this by setting the FILTER_PATH environment variable; the default is <PYTHON_PACKAGE>/filters/*.json.
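To preview which files a given DATA_PATH pattern would pick up, a sketch along these lines may help. It only mirrors the behaviour described above (an environment variable holding a glob pattern, with a default); find_data_files is a hypothetical helper, not part of OpusCleaner.

```python
import glob
import os

# Default pattern as documented above.
DEFAULT_DATA_PATH = "data/train-parts/*.*.gz"


def find_data_files(environ=os.environ):
    """Return the sorted files matched by DATA_PATH, or by the default pattern."""
    pattern = environ.get("DATA_PATH", DEFAULT_DATA_PATH)
    return sorted(glob.glob(pattern))
```

Passing a plain dict such as {"DATA_PATH": "./my-datasets/*.*.gz"} instead of os.environ lets you try a pattern without changing your shell environment.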

Installation for development

cd frontend

npm clean-install

npm run build

cd ..

python3 -m venv .env

bash --init-file .env/bin/activate

pip install -e .

Finally, you can run opuscleaner-server as normal. The --reload option will cause it to restart when any of the Python files change.

opuscleaner-server serve --reload

Then go to http://127.0.0.1:8000/ for the "interface" or http://127.0.0.1:8000/docs for the API.

Frontend development

If you're doing frontend development, try also running:

cd frontend

npm run dev

Then go to http://127.0.0.1:5173/ for the "interface".

This will put Vite in hot-reloading mode for easier JavaScript development. All API requests will be proxied to the Python server running on port 8000, which is why you need to run both at the same time.

Filters

If you want to use LASER, you will also need to download its assets:

python -m laserembeddings download-models

Packaging

Run npm run build in the frontend/ directory first, and then run hatch build . in the project directory to build the wheel and source distribution.

To push a new release to PyPI from GitHub, tag a commit with a vX.Y.Z version number (including the v prefix). Then publish a release on GitHub. This should trigger a workflow that pushes an sdist and a wheel to PyPI.

Acknowledgements

This project has received funding from the European Union’s Horizon Europe research and innovation programme under grant agreement No 101070350 and from UK Research and Innovation (UKRI) under the UK government’s Horizon Europe funding guarantee [grant number 10052546].
