A PyTorch implementation of Proximal Policy Optimization for transformer language models.

TRL - Transformer Reinforcement Learning

Train transformer language models with reinforcement learning.

What is it?

With trl you can train transformer language models with Proximal Policy Optimization (PPO). The library is built on top of the transformers library by 🤗 Hugging Face, so pre-trained language models can be loaded directly via transformers. At this point, most decoder and encoder-decoder architectures are supported.

Highlights:

- PPOTrainer: A PPO trainer for language models that just needs (query, response, reward) triplets to optimise the language model.

- AutoModelForCausalLMWithValueHead & AutoModelForSeq2SeqLMWithValueHead: A transformer model with an additional scalar output for each token which can be used as a value function in reinforcement learning.

- Example: Train GPT2 to generate positive movie reviews with a BERT sentiment classifier.

How it works

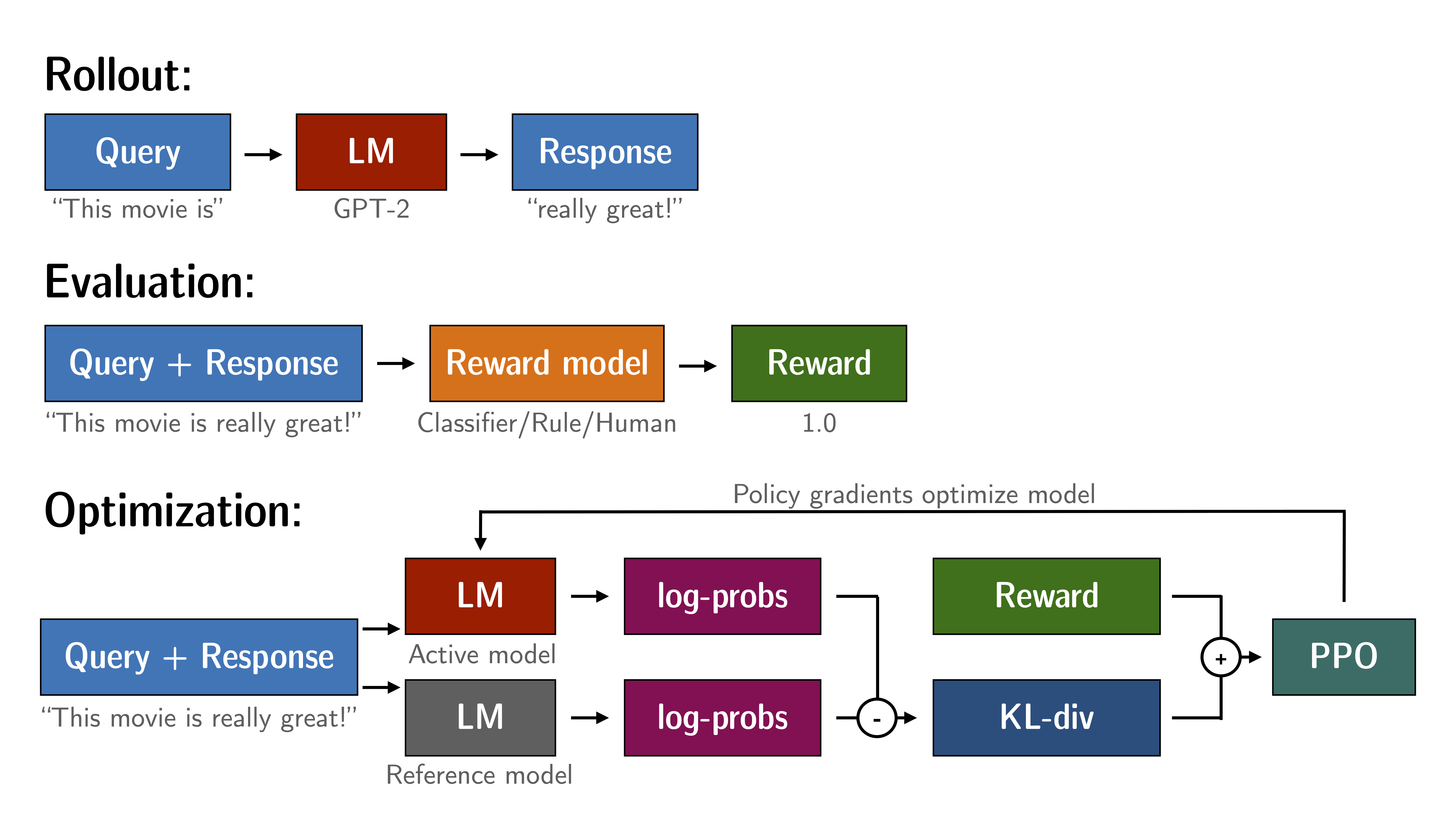

Fine-tuning a language model via PPO consists of roughly three steps:

- Rollout: The language model generates a response or continuation based on a query, which could be the start of a sentence.

- Evaluation: The query and response are evaluated with a function, model, human feedback or some combination of them. The important thing is that this process should yield a scalar value for each query/response pair.

- Optimization: This is the most complex part. In the optimisation step the query/response pairs are used to calculate the log-probabilities of the tokens in the sequences. This is done with the model that is being trained and a reference model, which is usually the pre-trained model before fine-tuning. The KL-divergence between the two outputs is used as an additional reward signal to make sure the generated responses don't deviate too far from the reference language model. The active language model is then trained with PPO.

This process is illustrated in the sketch below:

Figure: Sketch of the workflow.
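The KL term in the optimization step can be sketched in a few lines. The framework-free sketch below assumes the common per-token formulation: the penalty is the difference between the log-probabilities under the active and reference models, scaled by a coefficient, and the scalar score from the evaluation step is added at the final response token. The function name `kl_shaped_rewards` and the parameter `beta` are illustrative and not part of the trl API.

```python
from typing import List, Sequence


def kl_shaped_rewards(
    logprobs: Sequence[float],
    ref_logprobs: Sequence[float],
    score: float,
    beta: float = 0.2,
) -> List[float]:
    """Combine a per-token KL penalty with a scalar score (illustrative only)."""
    if len(logprobs) != len(ref_logprobs):
        raise ValueError("both models must score the same response tokens")
    if not logprobs:
        raise ValueError("response must contain at least one token")
    # Per-token KL estimate: log pi(token) - log pi_ref(token).
    kl = [lp - ref_lp for lp, ref_lp in zip(logprobs, ref_logprobs)]
    # Penalise drift away from the reference model at every token.
    rewards = [-beta * k for k in kl]
    # The scalar evaluation score is credited to the last response token.
    rewards[-1] += score
    return rewards


# Hypothetical log-probabilities for a two-token response.
rewards = kl_shaped_rewards([-1.0, -2.0], [-1.5, -1.5], score=1.0, beta=0.2)
print(rewards)
```

A larger `beta` keeps responses closer to the reference model, at the cost of slower progress on the evaluation score.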

Installation

Python package

Install the library with pip:

    pip install trl

From source

If you want to run the examples in the repository, a few additional libraries are required. Clone the repository and install it with pip:

    git clone https://github.com/lvwerra/trl.git
    cd trl/
    pip install .

If you wish to develop TRL, you should install in editable mode:

    pip install -e .
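To confirm which version ended up in the environment after either install route, a small standard-library check can help. This is a minimal sketch; the helper name `installed_version` is illustrative, and it assumes the distribution is registered under the name `trl`.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Prints the version string if trl is installed, otherwise None.
print(installed_version("trl"))
```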

How to use

Example

This is a basic example of how to use the library. Based on a query, the language model creates a response, which is then evaluated. The evaluation could be a human in the loop or another model's output.

    # imports
    import torch
    from transformers import AutoTokenizer
    from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead, create_reference_model
    from trl.core import respond_to_batch

    # get models
    model = AutoModelForCausalLMWithValueHead.from_pretrained('gpt2')
    model_ref = create_reference_model(model)
    tokenizer = AutoTokenizer.from_pretrained('gpt2')

    # initialize trainer
    ppo_config = PPOConfig(
        batch_size=1,
    )

    # encode a query
    query_txt = "This morning I went to the "
    query_tensor = tokenizer.encode(query_txt, return_tensors="pt")

    # get model response
    response_tensor = respond_to_batch(model_ref, query_tensor)

    # create a ppo trainer
    ppo_trainer = PPOTrainer(ppo_config, model, model_ref, tokenizer)

    # define a reward for response
    # (this could be any reward such as human feedback or output from another model)
    reward = [torch.tensor(1.0)]

    # train model for one step with ppo
    train_stats = ppo_trainer.step([query_tensor[0]], [response_tensor[0]], reward)
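The constant reward above stands in for a real evaluation. As a rough illustration of a function-based evaluation, the sketch below scores a decoded response by counting words from a tiny hand-written lexicon. The lexicon, the function name `lexicon_reward`, and the scoring rule are all made up for this example; a real setup would more likely use a trained classifier or human feedback.

```python
import re
from typing import FrozenSet

# Hypothetical word lists, chosen only for illustration.
POSITIVE_WORDS: FrozenSet[str] = frozenset({"good", "great", "love", "wonderful"})
NEGATIVE_WORDS: FrozenSet[str] = frozenset({"bad", "awful", "hate", "boring"})


def lexicon_reward(response_text: str) -> float:
    """Return (#positive - #negative) / #words, a scalar in [-1, 1]."""
    words = re.findall(r"[a-z']+", response_text.lower())
    if not words:
        return 0.0
    positive = sum(word in POSITIVE_WORDS for word in words)
    negative = sum(word in NEGATIVE_WORDS for word in words)
    return (positive - negative) / len(words)


print(lexicon_reward("great movie , I love it"))
```

To use such a score in the example above, the response tensor would first be decoded with the tokenizer, and the resulting float wrapped in a tensor the same way the constant reward is, for instance `[torch.tensor(lexicon_reward(text))]`.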

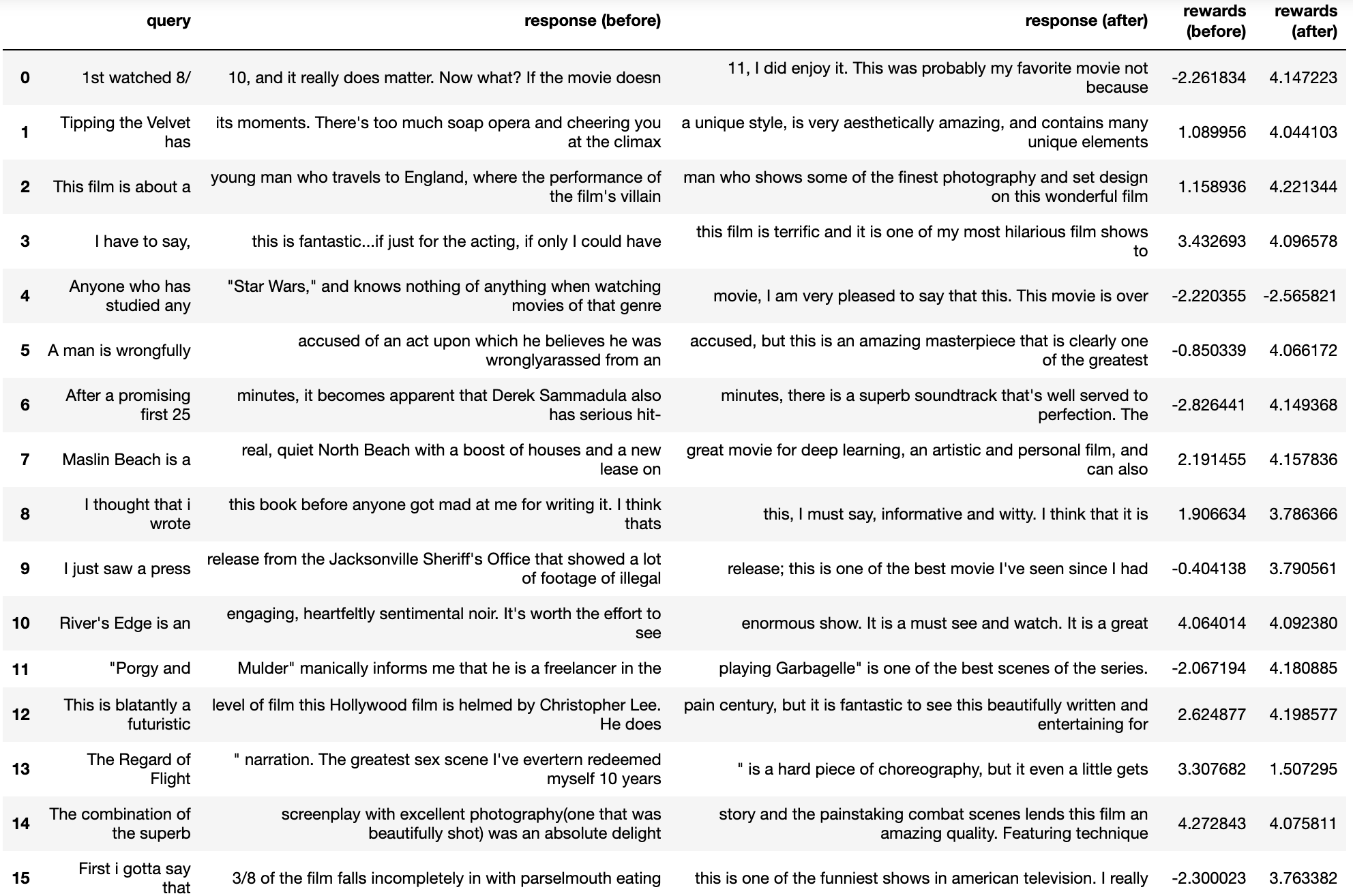

Advanced example: IMDB sentiment

For a detailed example, check out the Python script examples/scripts/ppo-sentiment.py, where GPT2 is fine-tuned to generate positive movie reviews. A few examples from the language models before and after optimisation are given below:

Figure: A few review continuations before and after optimisation.
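The script is the reference for the actual training setup. As a rough mental model only, a loop of this kind repeats the rollout, evaluation, and optimization steps over batches of queries. The sketch below expresses that shape with plain callables; `run_epoch`, `generate`, `score`, and `optimize` are hypothetical placeholders rather than trl functions, and the script's real structure may differ.

```python
from typing import Any, Callable, List, Sequence


def run_epoch(
    queries: Sequence[Any],
    generate: Callable[[Any], Any],
    score: Callable[[Any, Any], float],
    optimize: Callable[[List[Any], List[Any], List[float]], Any],
    batch_size: int,
) -> List[Any]:
    """Run rollout, evaluation, and optimization once over all queries."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    stats = []
    for start in range(0, len(queries), batch_size):
        batch = list(queries[start:start + batch_size])
        # Rollout: the policy continues each query.
        responses = [generate(query) for query in batch]
        # Evaluation: one scalar per query/response pair.
        rewards = [score(q, r) for q, r in zip(batch, responses)]
        # Optimization: one update from the (query, response, reward) triplets.
        stats.append(optimize(batch, responses, rewards))
    return stats


# Toy stand-ins so the sketch runs without any model.
demo_stats = run_epoch(
    queries=["a", "b", "c"],
    generate=lambda q: q + "!",
    score=lambda q, r: float(len(r)),
    optimize=lambda qs, rs, rws: sum(rws) / len(rws),
    batch_size=2,
)
print(demo_stats)
```

In a sentiment setup like the one described, `generate` would correspond to sampling a continuation from the language model, `score` to the classifier's output, and `optimize` to a PPO step.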

References

Proximal Policy Optimisation

The PPO implementation largely follows the structure introduced in the paper "Fine-Tuning Language Models from Human Preferences" by D. Ziegler et al. [paper, code].

Language models

The language models utilize the transformers library by 🤗 Hugging Face.

Citation

    @misc{vonwerra2022trl,
      author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert},
      title = {TRL: Transformer Reinforcement Learning},
      year = {2020},
      publisher = {GitHub},
      journal = {GitHub repository},
      howpublished = {\url{https://github.com/lvwerra/trl}}
    }

File details

Details for the file trl-0.3.0.tar.gz.

File metadata

- Download URL: trl-0.3.0.tar.gz

- Upload date:

- Size: 39.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 129279e5b04c4facc0f15915bdbe3bb873fcd078bdf289d77dc7b770a5a7bc5e |
| MD5 | 1fabe0e0d2c1e0fff74741008fdae20e |
| BLAKE2b-256 | 45f4f6cd20293c79b23f98be72847383ed17ad826e714a35b2ffdfee0841813b |

File details

Details for the file trl-0.3.0-py3-none-any.whl.

File metadata

- Download URL: trl-0.3.0-py3-none-any.whl

- Upload date:

- Size: 43.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f5cfe5765565cb72600302e1adcbd8b84341d73f3e159abadcd45bbc064c8855 |
| MD5 | 77af852640a6b1a3ff58763337684fcb |
| BLAKE2b-256 | a1b35264de6ee5f015a6797ccc9b1d1003edd2209861c4cedb4ad5b352ff86fd |